On a new installation of a SQL Server instance, by default, SQL Agent will automatically manage the job history retention, however this may not work the way you expect it to. This post explains why, and how you should set this up for best results.

When you start digging into this however, all may not be as you expect. To explain why, I will split this into the two sections shown on the GUI.

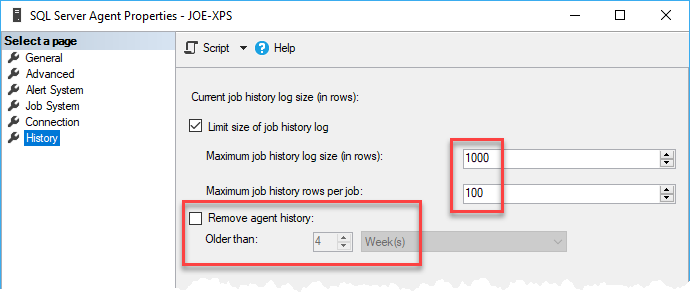

Current job history log size

By default, SQL Agent will keep up to 1000 rows of job history, and up to 100 rows per job. If you think about the scaling of this, 1000 rows in the dbo.sysjobhistory table is less than 1MB of storage, which is tiny. The overall job state is recorded as well as each individual step, so even for a single-step job by default you only get 50 executions kept, so if this job was running every 15 minutes you only get just over 5 days of history (although the rows per job limiter would bring this down to under 13 hours). As soon as you have a normal complement of jobs on the instance you will not be seeing any history on some jobs, as they will be getting aged out as the volume is too high.

So, you could whack this up to a larger number (999,999), but we tend to be interested in a time-based approach such as the history over the last week or month, and not an arbitrary number of rows. All it takes is to have enough databases log-shipping, and the history records rapidly increase which will cause the less frequently run jobs to lose all history.

But there is another compelling reason not to use the built-in job history controls, and that is there is contention in the code which is used. If you enable the row limits, then as part of every single job execution the job history is maintained. It is not done on a separate schedule, but as part of every history call.

When a job completes, a stored procedure (dbo.sp_agent_log_job_history) is called to record the job history, which in turn calls the stored procedure dbo.sp_sqlagent_log_jobhistory.

This procedure has the following code block:

-- Delete any history rows that are over the registry-defined limits

IF (@step_id = 0) --only check once per job execution.

BEGIN

EXECUTE msdb.dbo.sp_jobhistory_row_limiter @job_id

ENDAnd it is dbo.sp_jobhistory_row_limiter which causes the problems.

After first checking in the registry to see if history rows need to be maintained, it then starts a transaction with the following code:

BEGIN TRANSACTION

SELECT @current_rows_per_job = COUNT(*)

FROM msdb.dbo.sysjobhistory with (TABLOCKX)

WHERE (job_id = @job_id)The key part here is TABLOCKX. Even though the query is filtered down to the one job, this will take and hold exclusive locks across the entire table, for the duration of the transaction. Once the locks are in place then it deletes rows to maintain the per job limit, and then deletes to maintain the total rows in history. Then it commits and releases the locks.

So when you get a number of jobs completing at the same time, they are all contending for exclusive table locks on the dbo.sysjobhistory table and each job cannot record as completed until it gets the locks. We have seen this as an issue especially when there are a large number of log-shipped databases, where they are all blocked and waiting to maintain the job history. We have customers where 1000s of databases are log-shipping, and you can imagine how much contention and delay this causes. It gets to the point where schedules are getting missed as the previous run is still blocked trying to record the job history, as it needs to acquire an exclusive table lock to maintain the history. To be clear, this has nothing to do with how long the jobs are taking to run, it is down to being unable to record the completion status in the history table.

As a DBA, I would prefer to know that when investigating a job failure I will have sufficient history rows to see how it has been behaving. With the built-in limits that is not guaranteed as it is always the oldest records that get purged so just by having a few busy jobs, this can delete the history I need. Also I want maintenance to have minimal impact, and not disrupt the normal running of the jobs. I would also prefer to have control over when the maintenance is performed, and run it at a quieter point in the day.

Removing agent history

This is where the dialog does not obey the standard GUI rules people are accustomed to. Removing history is controlled by a checkbox, so you expect that when you tick it then it will remain in that state, and would maintain the amount of history based on the range specified. But this is not what it does.

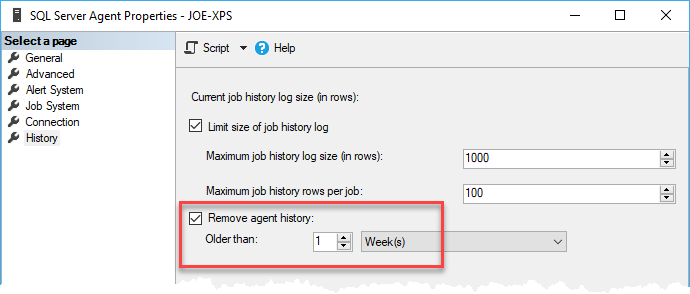

If I set the checkbox and choose a one week retention, and then press OK:

What happens is a one-off call to the purge history stored procedure which will look like:

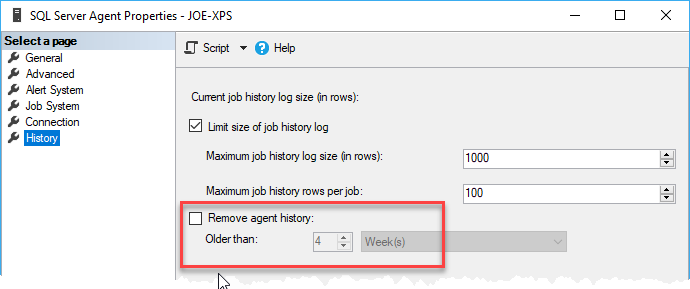

EXEC msdb.dbo.sp_purge_jobhistory @oldest_date='2018-02-07T16:06:46'And then if you re-open the dialog, you will see that the checkbox is now unchecked:

So this is not a persisted option, and instead of a checkbox think of this as a standard button. Which means using this built-in dialog you can only automatically control the history by specifying the overall number of rows, which is less useful and as we have seen, less efficient that just using the dbo.sp_purge_jobhistory stored procedure which allows us a time-based approach.

Summary

Due to the limitations and potential performance issues of using the built-in options of maintaining job history, I always prefer to disable this (by simply unchecking the checkbox) and instead create a daily job to call the purge history stored procedure. Not complicated to do, as an example to maintain a 31 day history you can make a job containing:

DECLARE @Cleanup datetime;

SET @Cleanup = DATEADD(day, -31, CURRENT_TIMESTAMP);

EXECUTE msdb.dbo.sp_purge_jobhistory @oldest_date = @Cleanup;And run it once a day at a time of your choosing.

You can also write your own bespoke code, as the dbo.sysjobhistory table is not restricted and you can manually delete from it. We have had customers with hundreds of high volume jobs and we needed to maintain a overall month of history, but for the busier jobs just keep one week. We managed this by writing our own code to do the deletes on this basis, which solved the problem. But this is an edge case, for most scenarios the above code is all you need.